Accusations frozen in time: How Beall’s List still hurts publishers in 2026

A little-examined consequence of the predatory publishing phenomenon is the damage done to legitimate publishers that got swept up in it – not because they were truly predatory, but because they were listed alongside journals that were. Beall’s List, the well-intentioned and perhaps most famous list of predatory publishers and journals, has long been shuttered. …

Out of time

This week, hundreds of librarians, publishers, and vendors have congregated in the UK city of Glasgow to attend the annual UK Serials Group (UKSG) conference. The event has been a fixture on the scholarly communications events circuit for many years, but there was a clear sense this year that things are under strain, and a once vibrant industry is going through …

Cabells launches white paper on optimizing journal decision-making at UKSG

BEAUMONT, TX: Cabells – a US-based information services company – has launched a white paper (available for download below) providing insights to researchers, librarians, and aligned professionals on how its Journalytics platform can optimize crucial decision-making. Working from prior research underlining the importance of decision-making when it comes to choosing the right journal for a …

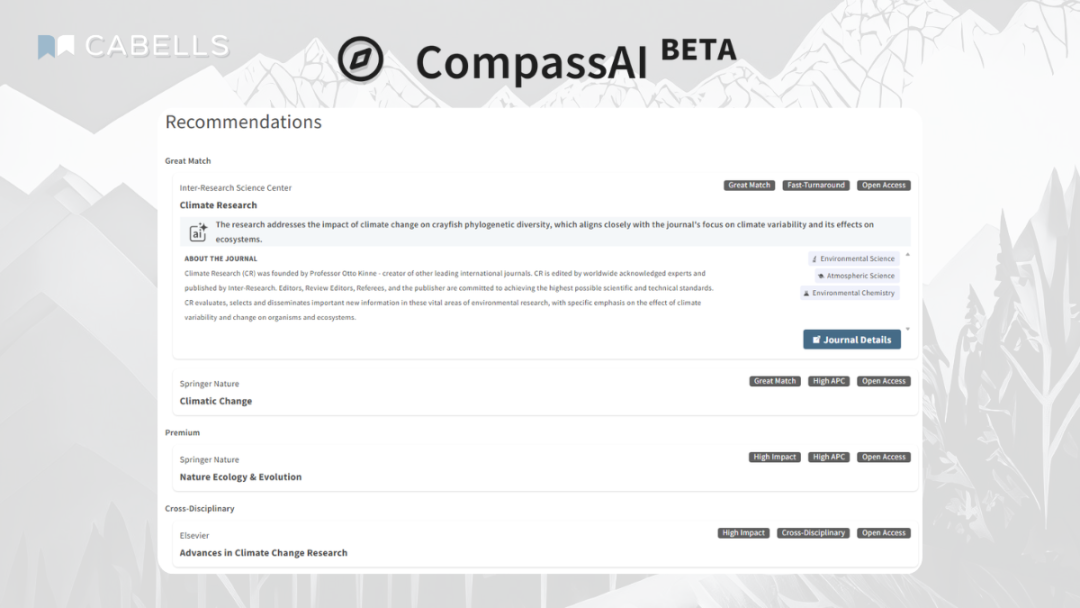

Finding your compass

When US Secretary of Defense Donald Rumsfeld gave his now-famous speech in the early 2000s about what we know and don’t know, it is fair to say there was a good deal of mockery. Not only did it sound extremely odd, it also didn’t seem to make sense initially. What on earth are ‘known unknowns’ …

Next-level decisions

After several years in development, Cabells launches its new Journalytics STEM product today, offering the same next-level journal data as its companion Academic and Medicine products to support the best possible decision-making across STEM subjects. It’s taken time to put together Journalytics STEM simply because there are a lot of journals to review, curate, and assess in this field, with well over 7,000 journals included across science, …

Cabells launches Journalytics STEM – verified journal database to optimize publishing decisions

FOR IMMEDIATE RELEASE BEAUMONT, TX: Cabells – a US-based information services company – is launching Journalytics STEM, the latest in its suite of powerful and trusted database products to support researchers and institutions in optimizing decision-making for publications. With Journalytics STEM, Cabells has taken the successful formula it has developed with Journalytics Academic and Journalytics Medicine …

Cabells’ Predatory Reports database hits 20,000 deceptive journals

FOR IMMEDIATE RELEASE BEAUMONT, TX: Cabells – a US-based information services company – now includes over 20,000 journals in its Predatory Reports database, with the unique resource growing by over 300% since its launch in 2017. After hitting the 10,000 mark in 2019 and 15,000 in 2021, a recent upgrade in the technology governing the …

Who can you trust?

Think back, if you can, 25 years to January 2001. What do you remember? To jog your memory, that month saw the inauguration of George W. Bush as U.S. President, the launch of iTunes from Apple, and the appointment of the England football team’s first foreign manager, Sven-Göran Eriksson. While you sit there thinking how old you feel, this will make you …

Ghosts in the machine

Breaching research integrity is often regarded as, at worst, a white-collar crime reserved for nerdy types who couldn’t quite cut it intellectually; at best, it’s not even regarded at all – it is simply invisible to most people as they go about their lives. However, this may be about to change with the release of a new documentary that may bring the problem …

Land of make believe

A Happy New Year to everyone, and if you can’t quite believe it is January already, then recent news on the impact of AI on scholarly communications is not going to help with that feeling of things not being quite real. Towards the end of 2025, reports started to emerge of 'imaginary journals.' We have …