When US Secretary of Defense Donald Rumsfeld made his now-famous remarks about what we know and don’t know in 2002, they attracted a good deal of mockery. Not only did they sound extremely odd, they also didn’t seem to make sense at first. What on earth are ‘known unknowns’, and what possible use is knowing about something we don’t know?

Judging by how often they are quoted (and misquoted), the remarks have aged well. In their original formulation, Rumsfeld ran through ‘known knowns’ (things we know and can verify), ‘known unknowns’ (things we know exist but don’t know the answers to), and ‘unknown unknowns’ (things we don’t even know we don’t know). A calculated risk, such as a flight cancellation, is a known unknown: you know your flight could be cancelled, and that certain events make it more likely, but you don’t know whether it will actually happen.

Worth the risk?

Weighing these kinds of questions and plotting a way through them to make optimal decisions is known as decision science. One would think that a discipline focused on decision-making would be high-profile and well articulated; however, it remains a rather obscure branch of management studies. Built on a range of quantitative techniques, it includes core skills such as risk analysis, cost-effectiveness analysis, simulation modelling, and behavioral decision theory. It is multidisciplinary by nature, drawing on economics, psychology, statistics, management control and, increasingly, computer science.

Decision science has been criticised as rather mundane, on the grounds that the real interest lies in the evidence at hand or in the impact of past decisions rather than in the process of deciding. Yet decision-making takes up almost every waking moment of our day, from choosing what to eat for breakfast to picking which book to read before bed. And when the decisions are potentially career-defining, such as which journal to publish your research in, understanding how that process can be optimized could hardly matter more.

One direction

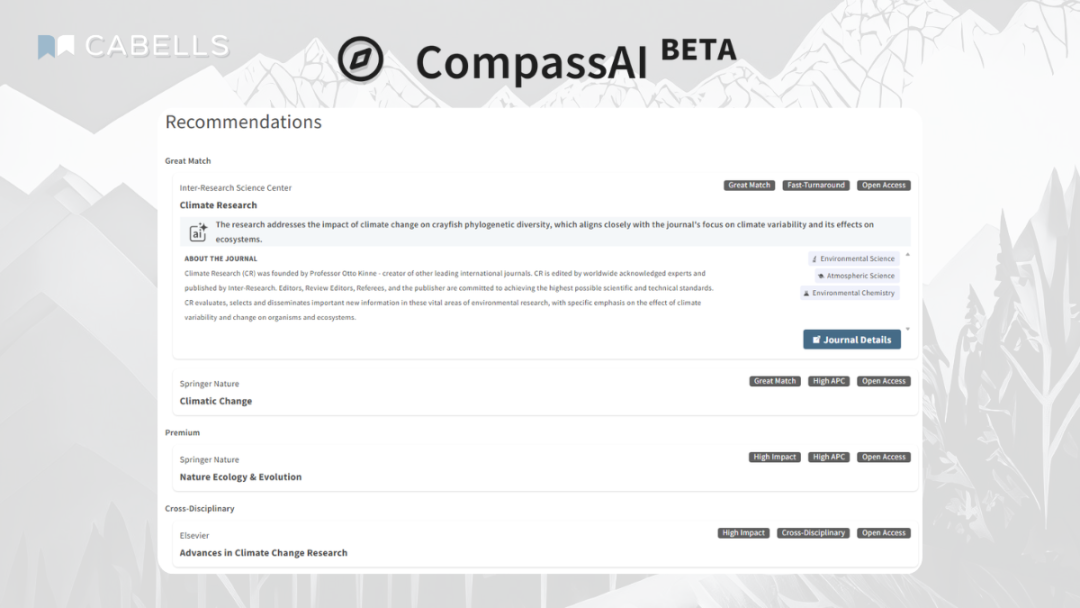

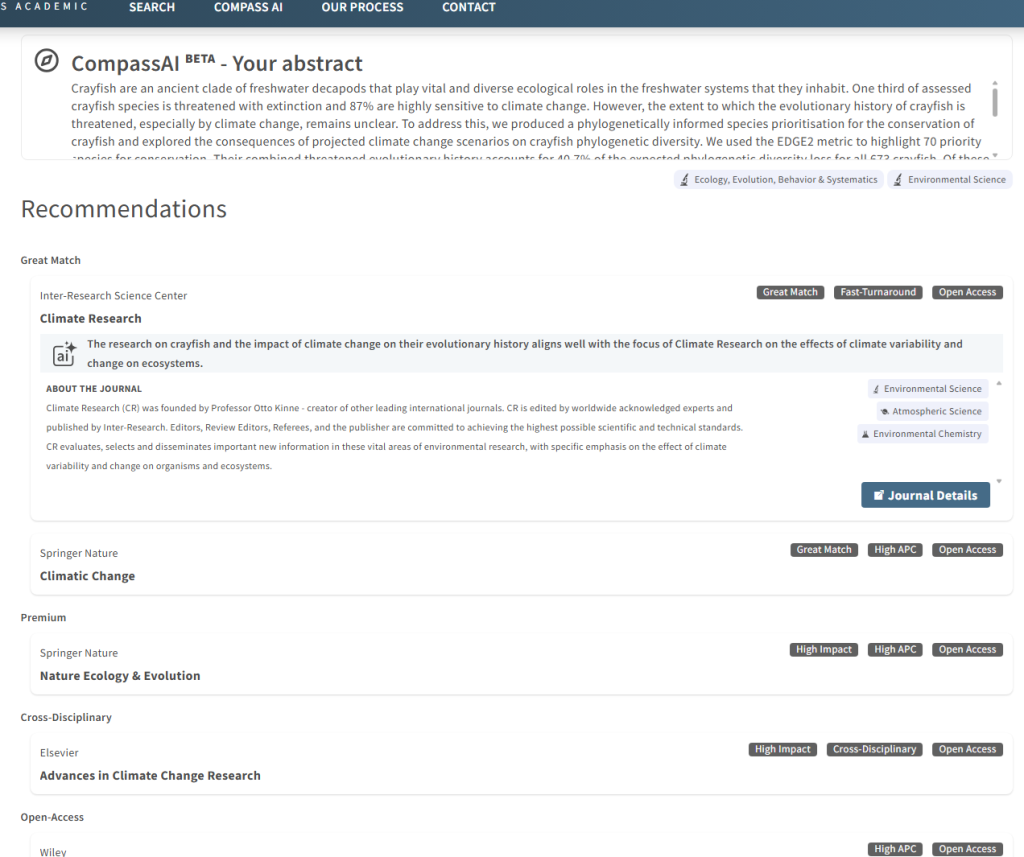

The fact that academic publishing news is littered with reports of research integrity breaches, predatory journal scandals, and mass retractions suggests that, at the very least, a lot of decision-making has been going wrong somewhere. This is one of the reasons Cabells developed and launched its CompassAI tool, which is now available to all customers of its Journalytics products.

CompassAI builds on the huge range of data points held for each journal in the Journalytics database, such as time to publication, Altmetric data, and acceptance rates. Users can compare these data points themselves, but doing so across a number of journals is difficult and time-consuming, and there is the added challenge of judging whether a given journal’s scope is the right fit for your research. CompassAI takes your abstract (though any introduction or summary will suffice) and matches it against the tens of thousands of journals in our database.

This approach (assessing the available data, synthesizing journal scopes, and eliminating predatory journals) aligns closely with decision science best practice. Restricting the use of AI to known parameters also strengthens the tool’s technical power while limiting the risk of fabrication. With the sheer number of journals in existence and the nefarious actors seeking to disrupt things, choosing a journal has never been so fraught, but the decision-making process has at least been given a nudge in the right direction.