How do you choose the right journal? Some people might suggest this is an art rather than a science, only possible with years of publishing experience or a wide network of contacts at the top of a given field. Others might suggest that you can plan and execute a publication with near certainty, using data to proceed to a positive outcome.

The truth, as with all of these things, is a mixture of ‘it depends’ and ‘a bit of both.’ One tactic that will improve your chances no end, though, is something I have included in every one of the literally hundreds of talks and webinars I have given on publishing strategy. It sounds easy, but it requires habit and a curious-minded approach. And it can transform the way you think about journal choices.

Simply put: research your research.

Missing link

What on earth does this mean? Well, a common denominator of all researchers is that they are highly skilled at researching. And another is that they all want to publish that research in a journal. But less often do they put those skills to good use by researching the journals they would like to publish in with the same depth that they bring to their chosen field. If they had as much knowledge of the container for their research as they do of the research itself, they would be much more likely to make a good choice of journals to publish in.

Two cases in point have come up recently where a detailed trawl of relevant social media channels could help any would-be author identify the right journal for them. The first example was on LinkedIn – probably the most useful platform for academic news, products, and services – where an author posted about a recent publishing scam they had uncovered regarding citation manipulation. The trick was as follows:

- The author in question saw a paper posted on SSRN that looked similar to their own preprint on arXiv, as if an AI had been asked to rewrite it

- What was different from the original paper was that some new papers had been added to the reference section

- The additional references went to certain papers and were also included in a host of other preprints, creating a serious volume of citations to a handful of papers

- The author posted their experience on LinkedIn so others could be aware of the scam.
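
For anyone who wants to try this kind of check on reference lists they have gathered themselves, the pattern in the steps above (the same few references turning up across many preprints) can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the function name `shared_references`, the `min_preprints` threshold, and the sample identifiers are all hypothetical, and a real check would need properly matched reference data such as DOIs.

```python
from collections import Counter


def shared_references(preprints, min_preprints=3):
    """Return references cited by at least `min_preprints` distinct preprints.

    `preprints` maps a preprint identifier to its list of cited references.
    The threshold is an arbitrary illustrative value, not an established cutoff.
    """
    counts = Counter()
    for refs in preprints.values():
        # Use a set so a reference repeated within one preprint counts once.
        counts.update(set(refs))
    return {ref: n for ref, n in counts.items() if n >= min_preprints}


# Entirely made-up identifiers, for illustration only.
sample = {
    "preprint-A": ["ref-1", "ref-2", "ref-9"],
    "preprint-B": ["ref-3", "ref-9"],
    "preprint-C": ["ref-4", "ref-9", "ref-9"],
}

print(shared_references(sample))  # {'ref-9': 3}
```

A reference that many papers share is not suspicious in itself, since well-known works are cited widely; the output is just a list of candidates to look at more closely by hand.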

Bluesky thinking

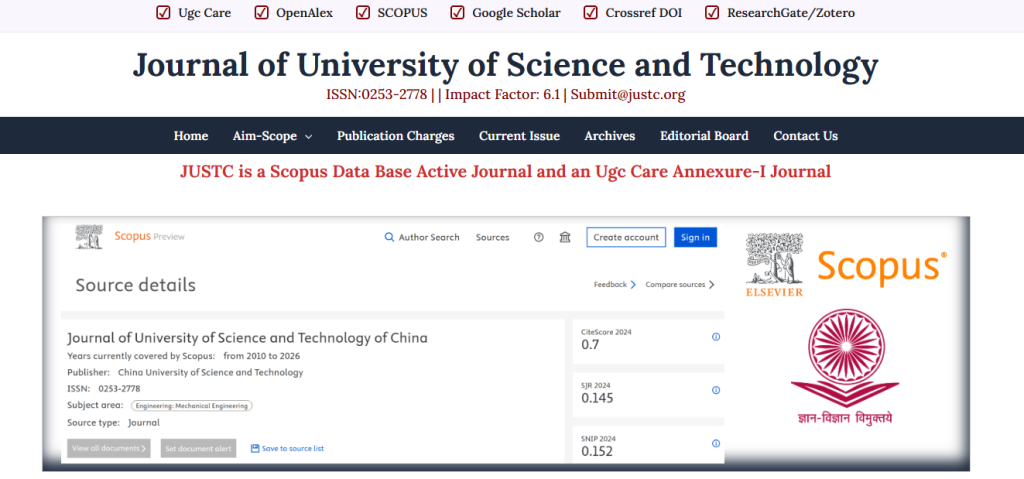

On another platform, there was a flag raised about a journal due to some suspicious email activity. An experienced academic there had received a communication from a journal and wondered aloud to their followers if it was legit. The problem they spotted was that while the journal was indexed by Scopus as it claimed, it did not have the Impact Factor it advertised on its homepage.

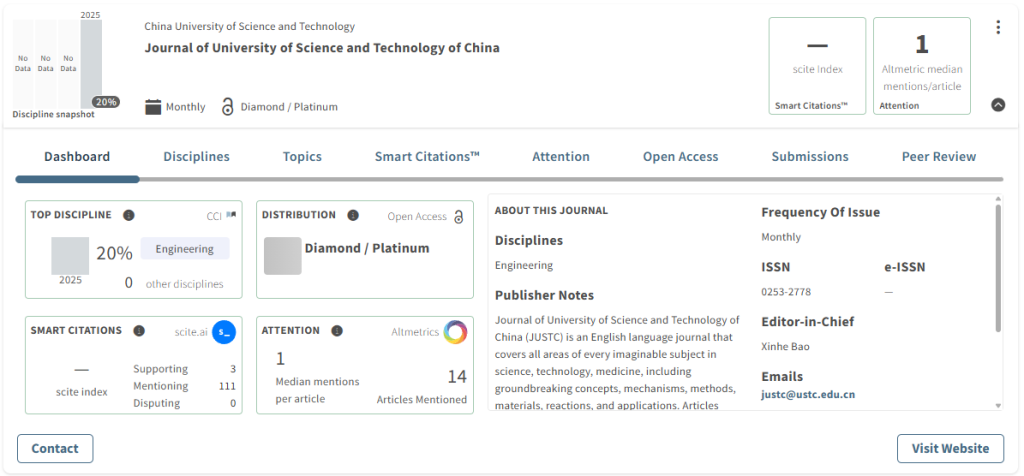

Cabells also indexes this journal, but a couple of things stood out – the journal we indexed had a different name (with ‘of China’ at the end of the title), and legitimate journals rarely announce their indexation as obviously as this one did, with an Impact Factor (IF) of 6.1 at the top of the page and a screengrab from the Scopus website. Additionally, the journal’s recorded CiteScore was 0.7 – it is highly unlikely, if not impossible, for any journal to have an IF of 6.1 with such a CiteScore given the similar way the two metrics are calculated.
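
For readers less familiar with the two metrics, their definitions can be written roughly as follows. This is a simplified sketch based on the publicly documented two-year Impact Factor and the current four-year CiteScore methodology; the symbols are shorthand introduced here for illustration, and the exact rules on which document types count are left out.

```latex
% Y      : the metric year
% C_Y(t) : citations received in year Y by items the journal published in year t
% N_t    : citable items the journal published in year t
\[
  \mathrm{IF}_Y = \frac{C_Y(Y-1) + C_Y(Y-2)}{N_{Y-1} + N_{Y-2}}
\]

% CiteScore uses one four-year window for both citations and documents
\[
  \mathrm{CiteScore}_Y =
  \frac{\text{citations received in } Y-3,\dots,Y \text{ by documents published in } Y-3,\dots,Y}
       {\text{documents published in } Y-3,\dots,Y}
\]
```

Both are ratios of recent citations to recently published items, so although the windows and underlying databases differ, the two numbers tend to move together. A gap as wide as 6.1 against 0.7 is therefore a cue to look more closely at where the advertised figure came from.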

Sure enough, after some checks, it was clear that the homepage in question was fake, with the original having been hijacked. This shows that a little sleuthing into where you publish can do wonders for the impact of what you choose to publish.