Imagine you wake up one morning with a set of unusual symptoms. Nothing to worry about, you think, but you had better check online just to make sure. Once you scroll past a few plausible-sounding, non-serious possibilities, your eyes alight on an illness you have never heard of before. But it sounds serious – really serious. You click on the link, and the academic article you find sends you into a blind panic as you start to think you might have a hitherto unknown condition…

Welcome to the world of AI-driven fake diseases.

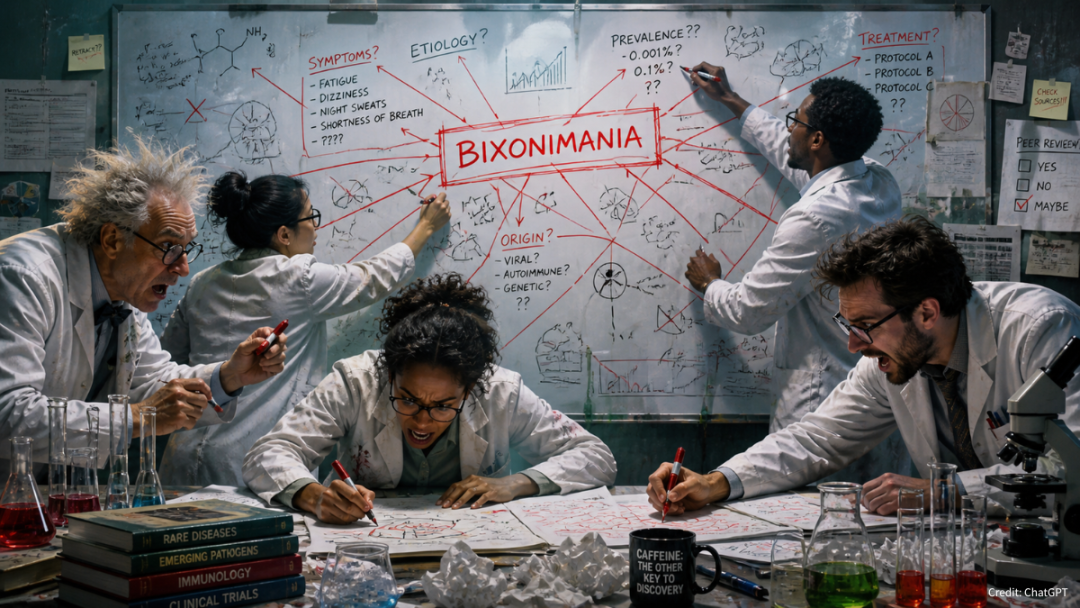

It sounds like a dystopian nightmare or the stuff of science fiction, but unfortunately we are starting to see fake diseases appear online and in journals as a result of AI use. A recent article in Nature has highlighted the issue, citing the example of ‘Bixonimania’ – a disease made up by Swedish scholars and posted on an article repository as an experiment to see whether the large language models (LLMs) that drive generative AI would pick it up as legitimate. As Nature reports, the experiment worked a little too well: the condition has since been regurgitated countless times in response to searches describing its symptoms.

Caveat emptor

Many of us have made the easy transition from using Google as our first port of call for any kind of scientific search – whether research-related or just out of curiosity – to using AI in some form, whether the handy AI summaries Google now displays above its top results or a dialogue with a chatbot. We have already discussed on this blog the ongoing problem publishers face from the ‘Google zero’ phenomenon, and fake diseases now look like another unintended consequence of the same shift.

What is interesting for our industry, and for research as a whole, is that any researcher reading the originally planted papers would have found it pretty clear that they were fake. Lines in the text stated as much outright, and anyone who made it as far as the acknowledgments would have read one thanking someone at Starfleet Command, with funding provided by the ‘Professor Sideshow Bob Foundation’. Despite these obvious tells, AI bots picked up the academic-looking information and ingested it to the extent that all the major AIs not only included details of the condition in response to queries, but also invented new ones, such as its prevalence.

Growing problem

This example is sadly not an isolated one. A similarly dark tale has been relayed by a Canadian man who spent days going deeper and deeper down a rabbit hole, seemingly egged on by a chatbot that convinced him he had invented a new branch of mathematics called chronoarithmics. Not only does no such thing exist, but the fruitless deep dive also left the man seeking counselling once he realized the whole experience had been fake.

Thanks to the websites cited above and discussions on social media, the profile of this issue has been raised and, one would hope, brought to the attention of the AI companies in question. But as some commenters on social media have pointed out, these occurrences raise some very troubling questions about the veracity of the research we see, on top of issues we already recognize, such as predatory journals and paper mills:

- The posted articles were subsequently published in ‘real’ peer-reviewed journals, polluting the scholarly record more deeply, even though the original papers had been retracted.

- The issue here is not so much that LLMs make up details as the evidence that they treat academic formatting as a proxy for credible research – and if that is the case, they will already have swallowed predatory journal articles hook, line, and sinker.

- As more articles cite the originals, or the articles that cited them, these too will be ingested by LLMs and regurgitated on social media, spreading the problem even further.

- These cases also suggest that researchers are not reading the original text, a bad habit made worse by the prevalence of AI and another symptom of the ‘Google zero’ effect.

- Finally, the point has been well made that preprint servers do not perform peer review, yet their articles are often treated as if they had been through such checks. Many servers do perform basic screening, including for AI anomalies, but this paper was still posted and shared widely (although it has since been taken down).

Many commentators on the use of AI in research ask the question: Where do you draw the line? What these and other issues seem to suggest is that the question itself is now futile: not only have innumerable lines already been crossed, but the idea of a line itself is now almost impossible to imagine.