Study highlights AI-fueled increase in references to fake sources

Recent reporting from the respected Dutch newspaper de Volkskrant highlights a growing problem in scholarly publishing: the rise of AI-generated fake citations in academic papers. According to the article, authored by Stan van Pelt, fabricated references (studies, articles, or journals that do not actually exist) have increased significantly alongside the widespread adoption … Continue reading Study highlights AI-fueled increase in references to fake sources

Posts

What’s in a name?

Imagine you wake up one morning with a set of unusual symptoms. Nothing to worry about, you think, but you had better check online just to make sure. Once you scroll through a few plausible-sounding, non-serious possibilities, your eyes alight on an illness you have never heard of before. But it sounds serious – really serious. You click on the link … Continue reading What’s in a name?

Research? It’s a social enterprise

How do you choose the right journal? Some people might suggest this is an art rather than a science, only possible with years of publishing experience or a wide network of contacts of those at the top of a given field. Others might suggest that you can plan and execute a publication with near certainty, using data to proceed to a positive outcome. The truth, as with all … Continue reading Research? It’s a social enterprise

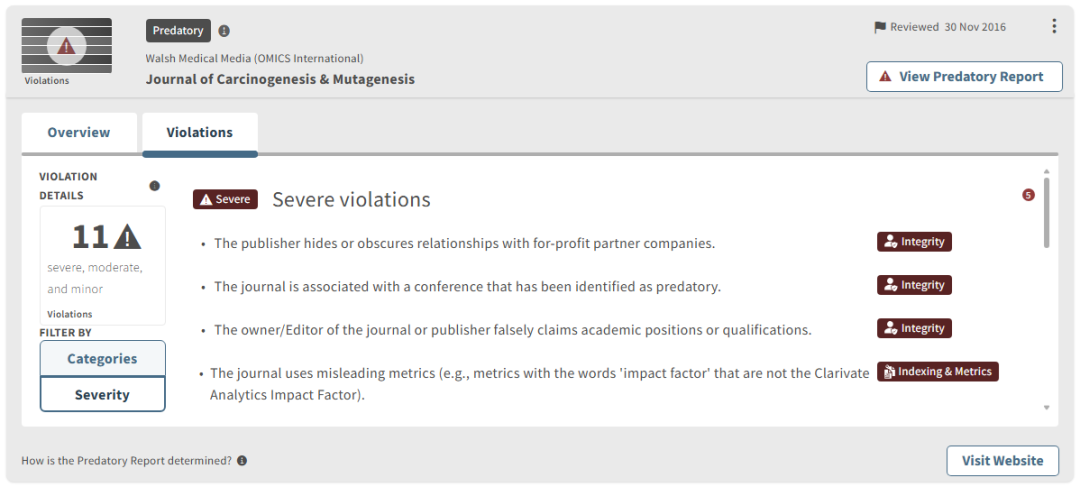

Accusations frozen in time: How Beall’s List still hurts publishers in 2026

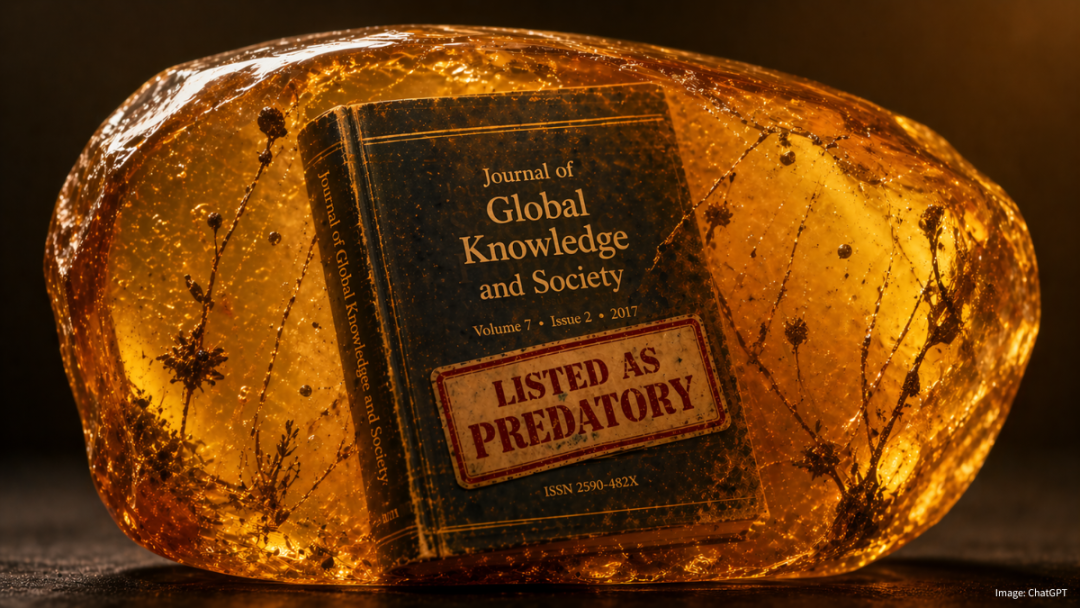

A little-examined consequence of the predatory publishing phenomenon is the damage done to legitimate publishers that got swept up in it – not because they were truly predatory, but because they were listed alongside journals that were. Beall’s List, the well-intentioned and perhaps most famous list of predatory publishers and journals, has long been shuttered. … Continue reading Accusations frozen in time: How Beall’s List still hurts publishers in 2026

Letters from America: AI

The news from business school leaders meeting in Seattle this week was simple: AACSB + ICAM = AI. To translate, it was the Association to Advance Collegiate Schools of Business (AACSB) annual get-together, known as the International Conference and Annual Meeting (ICAM), and the focus was very much on Artificial Intelligence. And by focus, while the … Continue reading Letters from America: AI

Hijack!

A question Cabells is often asked about the coverage of journals in its database products is, ‘How do you find out about them?’ This is not an easy question to answer, as there are so many reasons for a journal to come to our attention, for good or bad – editor recommendation, new launch, author … Continue reading Hijack!

Out of time

This week, hundreds of librarians, publishers, and vendors have congregated in the UK city of Glasgow to attend the annual UK Serials Group (UKSG) conference. The event has been a fixture on the scholarly communications circuit for many years, but there was a clear sense this year that things are under strain, and a once vibrant industry is going through … Continue reading Out of time

Cabells launches white paper on optimizing journal decision-making at UKSG

BEAUMONT, TX: Cabells – a US-based information services company – has launched a white paper (available for download below) providing insights to researchers, librarians, and aligned professionals on how its Journalytics platform can optimize crucial decision-making. Working from prior research underlining the importance of decision-making when it comes to choosing the right journal for a … Continue reading Cabells launches white paper on optimizing journal decision-making at UKSG

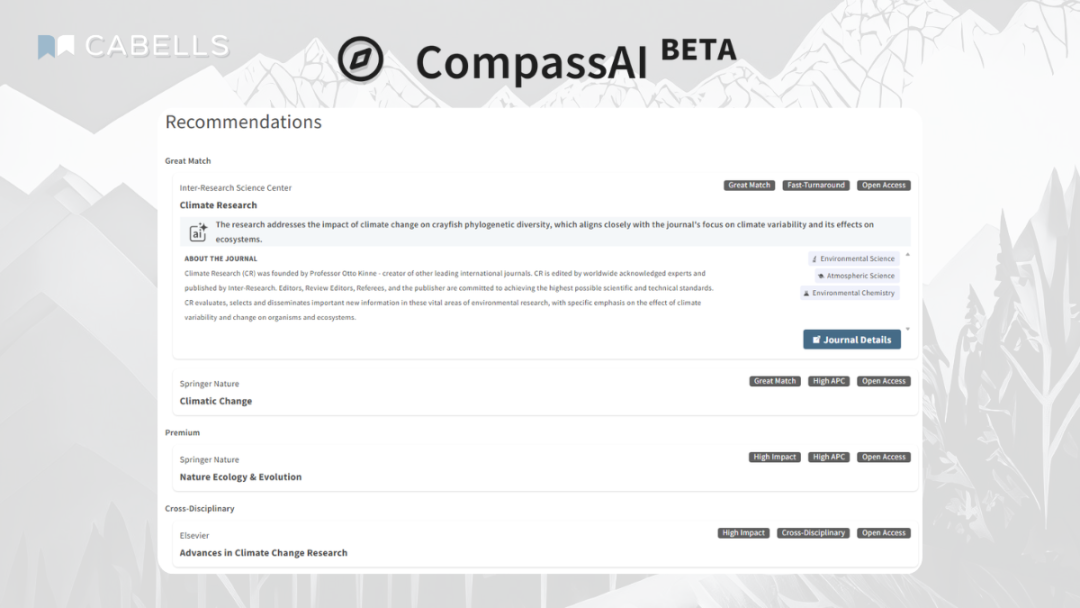

Finding your compass

When, in the early 2000s, US Secretary of Defense Donald Rumsfeld made his now-famous remarks about what we know and don’t know, it is fair to say there was a good deal of mockery. Not only did they sound extremely odd, they also didn’t seem to make sense initially. What on earth are ‘known unknowns’ … Continue reading Finding your compass

Look before you leap

Einstein is often said to have defined madness as doing the same thing again and again while expecting a different result. Current world events may lend support to this particular theory, but it is certainly not a new phenomenon. Indeed, one of the wisest lessons for authors is more than 2,500 years old. Aesop was believed to have been a … Continue reading Look before you leap